Chronicles of the Future – 21 June 2024

Artificial intelligence, climate change and demographics

Artificial intelligence

AI warfare

The Economist has a good leader on the use of AI in war. There are three main takeaways:

1) A lot of the focus on military AI is on autonomous weapons (drones, etc). But we should pay equal attention to other uses of military AI, including command and control, and decision support systems that help commanders, with the eventual goal of blended “human-machine teams”. The key question being: how is AI integrated into decision making processes?

2) For many years, the fear was that China had big advantages in military AI. That seems to have abated, with the US ahead in frontier models and computational power, and China moving more cautiously than people thought it might. They cite this new paper, which argues that China is proving more hesitant to deploy “unproven” technology in war.

3) And finally, they see a widening intellectual gap between those enthusiastic about the increasing role of AI in war, and those worried about the lethal & ethical implications.

A year and a half until PhD-level AI

Mira Murati, CTO at OpenAI, gave an interview where she said she expects AI to operate at “PhD level intelligence in certain tasks” in “a year and a half”. Murati describes the trajectory of ChatGPT, from v.3 operating at “toddler level intelligence”, to the latest v.4 at that of a “smart high schooler”.

These comments were lapped up by Leopold Aschenbrenner, whose series of essays I recently reviewed. He saw them as support for his thesis that AI technology will develop far quicker than almost anyone is predicting.

The trouble is, Murati has a clear incentive to be bullish about AI. It builds the hype-train around OpenAI, making it easier for it to raise the colossal sums it anticipates it will need to build the necessary GPUs to scale (Sam Altman said $7 trillion in February). Aschenbrenner, too, clearly wants a role in the public-private collaboration on superintelligence he claims is inevitable.

Where are the realistic predictions on AI development, that don’t come from those with vested interests?

Leftists against automation

Entrepreneur and investor Balaji Srinivasan gave an interview at a conference where he said that AI is taking the jobs of Democrat voters – “lawyers, doctors, artists, writers” – and that they are therefore trying to restrict the adoption of AI, limiting its potential economic benefits.

There is some truth to this. The jobs of white collar workers, who increasingly skew left across the West, do stand to be disrupted by AI. And we’ve already seen some of these workers revolt (see the Hollywood writers’ strike).

But I think Srinivasan’s comments lack historical context about who has opposed automation in the past. It has almost always been left-wing groups. From the Luddites to trade unions, workers’ groups have pushed back against technological advances that they think could do them out of jobs.

The difference, this time, is who these left wingers are. Before, they relied on numbers and sympathy. Mass walkouts on the production line and tales of poverty. But now, they’re richer, better educated and more powerful. The movement will work differently.

Climate change

Court says no

The UK Supreme Court has ruled that Surrey County Council should have considered the climate impact of burning oil drilled from new wells in Horley (rather than just the environmental impact of the operations on the site), in a landmark ruling which could end all future UK oil drilling.

For me, this is a more interesting ruling than the European Court of Human Rights’ recent wranglings about emissions in Switzerland (which seemed incomprehensible). The judge concluded that the downstream emissions of the oil drilled from the well should be included in any calculations because such emissions are “inevitable” and “straightforwardly results of this project.”

The inevitability of downstream emissions is persuasive. What else would happen with the oil? However, this does move UK regulation much closer to ‘Scope 3’ emissions, the broadest definition of net zero whereby all emissions in the supply chain are included in calculations. This isn’t currently mandated in other industries, so I would have liked to see an explanation of why oil is exceptional. And then there’s my constant gripe with decisions like this being made in courts, rather than by politicians.

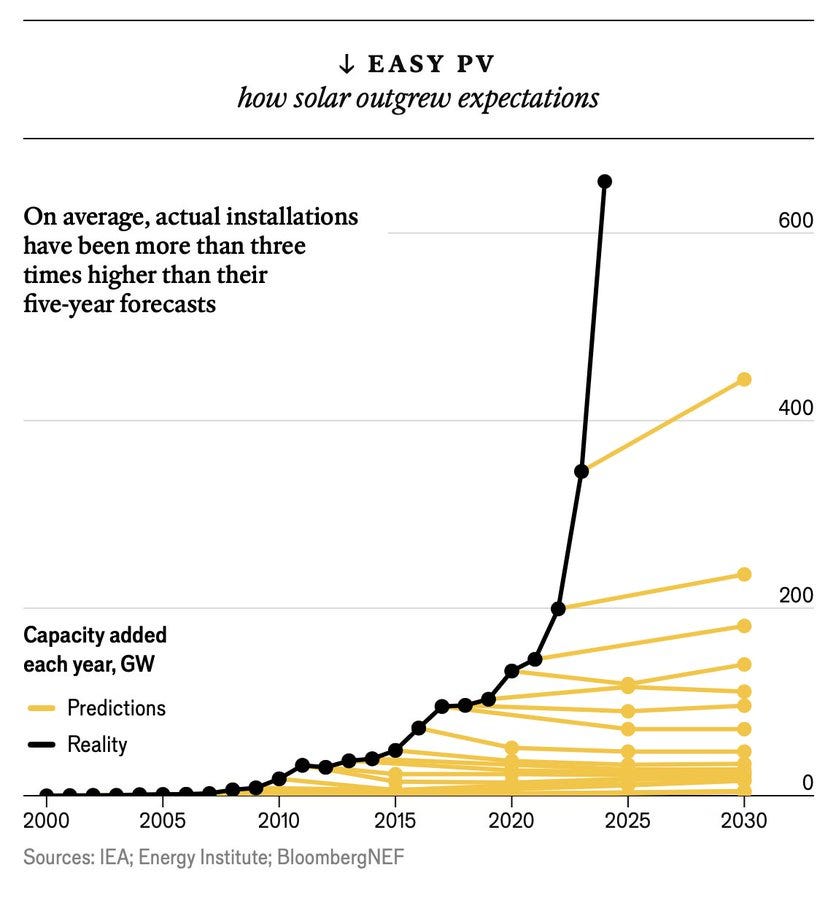

Solar reaches for the sky

This week’s newsletter is turning out to be slightly Economist themed, because another of their leaders this week (it was a good edition!) highlights how solar energy is growing exponentially.

Here’s the graph that tells the story.

Demographics

The quiet return of eugenics

Louise Perry has a fabulous long-read in the Spectator about the re-emergence of eugenics. The summary is that new science (polygenic screening) will soon make ‘designer babies’ a reality, prompting all kinds of ethical questions.

We can already screen embryos for all kinds of health vulnerabilities (heart disease, diabetes, cancer), as well as their likely physical and psychological traits: height, hair colour, athletic ability, conscientiousness, altruism, intelligence. This exists for around £10,000 and will only become cheaper and more effective.

Other forms of eugenics are much more common. Most people now screen for Down’s Syndrome, while bans on cousin marriage are based more on the impact on future children than on moralism. We might not use the ‘e’ word, but that’s what it is.

Perry is uncomfortable with these developments. Noting eugenics’ dark history, she’s concerned about where this leads us. Will this mean the rich can game the genetic lottery (or ‘Darwin their way out of this mess’ – an Easter egg for my family!)? If some genes are deemed superior to others, does that mean some people are too? And what about the possibility of, as Jonathan Anomaly speculated in his 2020 book, Creating Future People, a group becoming so genetically distinct that they’re unable to interbreed with the rest of the population?

The answers to these questions aren’t obvious. What I do hope, however, is that their difficulty doesn’t cloud our judgement on the easier ones. If we can cheaply screen against terrible diseases (perhaps in the future, when such services are offered by the state), it should be mandatory for parents to choose embryos without the vulnerability. Parents shouldn’t have the right to potentially inflict disease and suffering on their unborn children when there are no downsides in avoiding it.

And on that cheery note… have a great weekend, all!